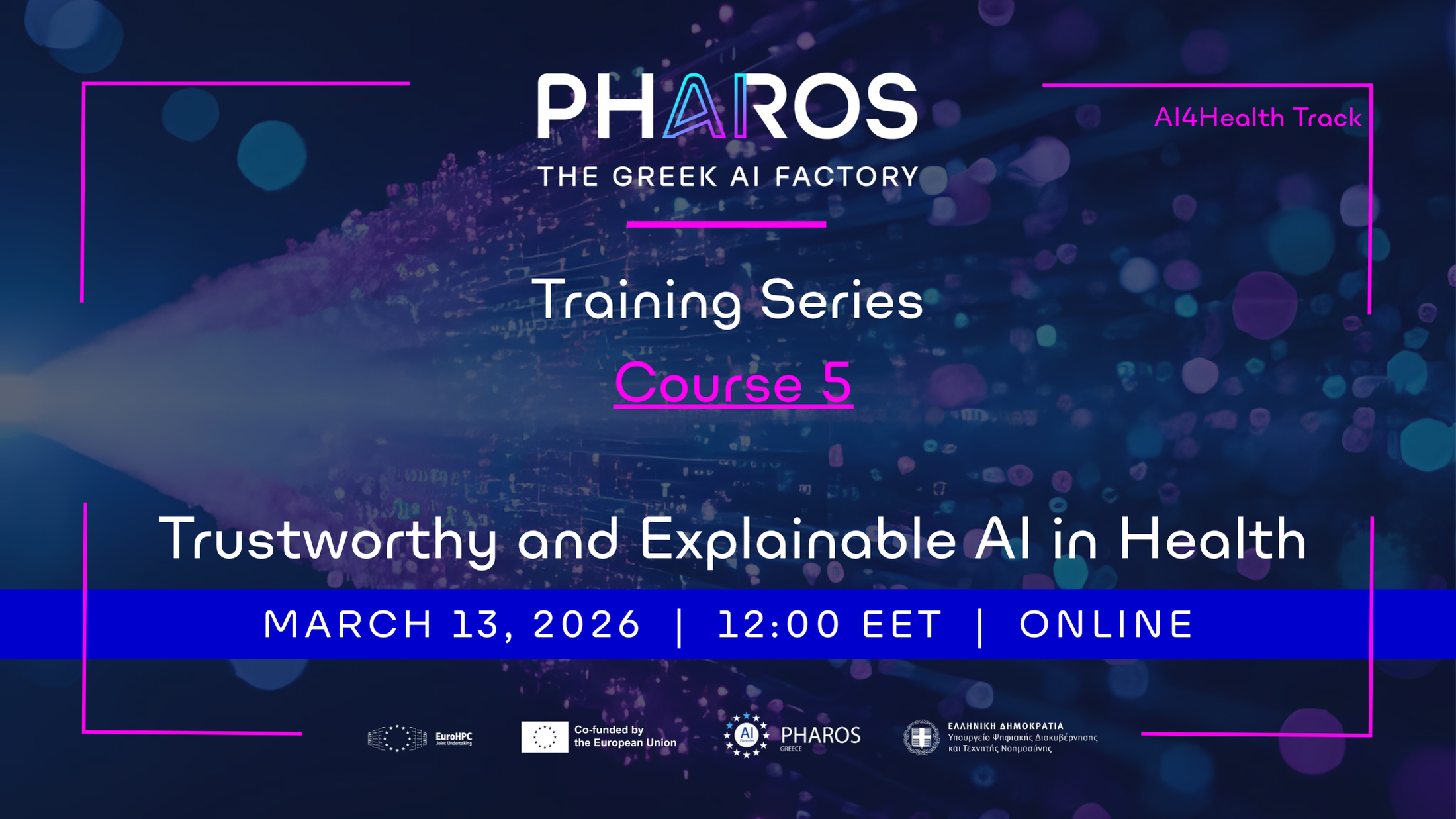

PHAROS AI Factory announces the 5th course of its Training Series, titled "Trustworthy and Explainable AI in Health" (Track AI4Health), held online via Zoom.

Date: March 13th, 2026, at 12:00 EET

Location: Online via Zoom

Presentation Language: Greek

PART I: FUTURE-AI principles for Trustworthy AI

Specialisation: AI4Health

Module Description: The rapid adoption of Artificial Intelligence in critical sectors such as healthcare, industry, and public administration makes the development of trustworthy, transparent, and human-centric AI systems essential. The FUTURE-AI framework provides a comprehensive set of principles and practices for building Trustworthy AI throughout the entire AI lifecycle. This webinar offers an in-depth introduction to the FUTURE-AI Principles, covering six core dimensions: Fairness, Universality, Traceability, Usability, Robustness, and Explainability. Participants will explore how these principles translate into concrete technical, organizational, and governance practices, aligned with the EU AI Act and international AI standards. Through an expert-led presentation, real-world examples, and interactive case studies (with a strong focus on healthcare applications), the session bridges theory and practice. Particular emphasis is placed on trustworthiness by design, risk assessment, and compliance strategies for high-risk AI systems. The webinar is designed for professionals, researchers, and decision-makers who aim to design, evaluate, deploy, or procure AI systems responsibly, ensuring societal acceptance, regulatory compliance, and long-term sustainability.

Audience:

Suitable for:

- AI and Machine Learning researchers and engineers

- Data Scientists and AI Architects

- Healthcare professionals using AI systems

- Innovation managers and digital transformation leaders

- Policy makers, ethics officers, and compliance experts

- Graduate and PhD students

Learning Objectives:

By participating, attendees will:

- Gain a deep understanding of the FUTURE-AI principles

- Understand the relationship between Trustworthy AI and the EU AI Act

- Engage directly with experts on practical implementation challenges

- Connect theoretical principles to real-world AI use cases

- Apply FUTURE-AI concepts in professional or project-based contexts

Learning Outcomes:

After completion, participants will be able to:

- Explain the core principles of the FUTURE-AI framework

- Analyze risks and trust gaps in AI systems

- Assess whether an AI system meets Trustworthy AI requirements

- Identify compliance and governance steps for AI deployment

- Define next steps for further learning or implementation

Instructor's profile:

Haridimos Kondylakis is an Associate Professor of Big Data Engineering at the University of Crete and a collaborating researcher at the Institute of Computer Science, FORTH. His research focuses on big data management, semantic technologies, artificial intelligence, and AI applications in healthcare and other high-risk domains. He has extensive experience in EU-funded research projects and has published widely in leading international journals and conferences. His work emphasizes the responsible, trustworthy, and interoperable use of AI, and he is a co-author of the FUTURE-AI principles.

PART II: From Quantitative MRI to Explainable AI models: Applications in Cancer Imaging

Specialisation: AI4Health

Module Description:

Explainable AI models play a critical role in healthcare, particularly in medical imaging, where clinical decisions must be transparent, trustworthy, and accountable. Unlike black-box models (mainly deep learning methods), explainable approaches provide insights into how predictions are made. In medical imaging tasks such as tumor detection, disease classification, and image segmentation, explainable AI techniques help clinicians verify whether an AI system is focusing on medically relevant structures rather than misleading patterns. This interpretability not only increases clinician confidence and supports clinical decision-making, but also aids in model validation, bias detection, and generalization. In practice, model explainability is presented by highlighting the image regions that most influenced a diagnosis or by quantifying the contribution of specific features. In conclusion, explainable AI bridges the gap between high-performing AI models and the need for safety, reliability, and generalizability in real-world healthcare settings.

Audience:

Suitable for:

- AI and Machine Learning researchers and engineers

- Data Scientists

- Healthcare professionals using AI systems

- Clinicians, radiologists, and medical technologists with an interest in AI

- Graduate and PhD students

Learning Objectives:

By participating, attendees will understand:

- The principles of cancer imaging

- The basic principles of MRI modeling and quantification

- Radiomics-based ML models and their fusion with quantitative MRI

- Explainability of radiology-based features

Learning Outcomes:

After completion, participants will be able to:

- Explain the principles of cancer imaging

- Identify MRI sequences and protocols

- Train a radiomics-based ML model

- Explain radiological model outcomes

Instructor's profile:

George Ioannidis is a mathematician with a master's degree in Applied and Computational Mathematics from the Department of Mathematics and Applied Mathematics at the University of Crete. In 2020, he received his Ph.D. from the Medical School of the University of Crete in the radiology/medical physics domain. Currently, he works as a postdoctoral researcher at the Computational Bio-Medicine Laboratory of the Foundation for Research and Technology - Hellas (FORTH). In addition to his research activity, he works as an adjunct lecturer at the Department of Biomedical Sciences at the University of West Attica. He has also been involved in various Greek and European projects (such as APOSIDI, Pro-CancerI, Radioval, and Cardio-Care) as a medical image analysis and machine learning expert. His main interests include the modeling of biological processes from magnetic resonance and computed tomography imaging data for biomarker extraction, medical image and signal processing, and the development of numerical methods and machine learning algorithms for the creation of clinical applications.

Note: Please enter your institutional/corporate email when registering.